Hi! I’m Baoxiong, a research scientist at BIGAI. I received my Ph.D. from the Department of Computer Science, University of California, Los Angeles (UCLA). My research interests lie at the intersection of computer vision, artificial intelligence, robotics, and cognitive science, with a special focus on spatial/temporal reasoning and its application to acting and planning in the real world (scene/activity understanding, future prediction, grounded manipulation, etc.). My recent work focuses on integrating all my previous research into humanoid robots and making them helpful when I’m old :-)

Previously, I obtained my M.S. from UCLA in 2019 and B.S. from Peking University in 2018.

Info: Email / Google Scholar / CV (May 2026) / Follow @BaoxiongJ

News

- New 2026/06 Invited keynote talk at the CVPR 2026 H2R Workshop. You can find the slides here.

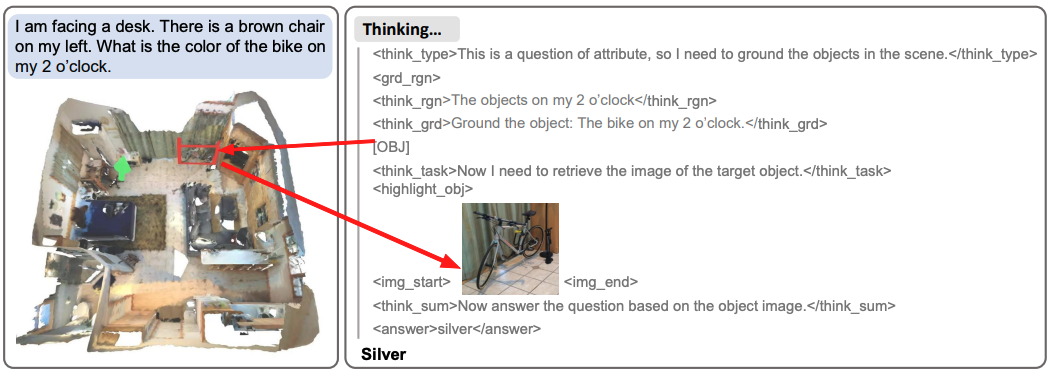

- New 2026/05 Two papers on latent action for VLA (LARA) and RL post-training for 3D-VLM (3D-RFT) are accepted by ICML 2026.

- New 2026/04 Our generalist motion tracker OmniXtreme is accepted by RSS 2026. Check it out!

- New 2026/04 Invited talk at the China3DV 2026 Youth Forum.

- New 2026/04 I’m co-organizing the 6th 3D Scene Understanding workshop at CVPR 2026. See you in Denver!

- New 2026/04 The newest work in our SceneVerse line is accepted by CVPR 2026. Check out the SceneVerse++ project!

- New 2026/03 UniFP has been selected as one of the Top-10 breakthroughs of 2025 by EAI-100.

- New 2026/02 Our paper COLA on Human-Humanoid Collaboration is accepted by ICRA 2026. Check it out!

- New 2026/02 Our paper SceneCOT on CoT-Reasoning in 3D scenes is accepted by ICLR 2026. Check it out!

- 2025/11 Invited tutorial at EIS 2025, hosted by ACM SIGEMBED China. Check out the slides!

- 2025/11 Invited talk at EAIRCon 2025 on 3D Gaussian World Models. Check out the slides!

- 2025/10 SceneWeaver receives the Best Paper Award at RoboGen@IROS25. Check out the slides and talk (EN)!

- 2025/10 We won first place at the IROS 2025 Unitree Dancing Challenge!

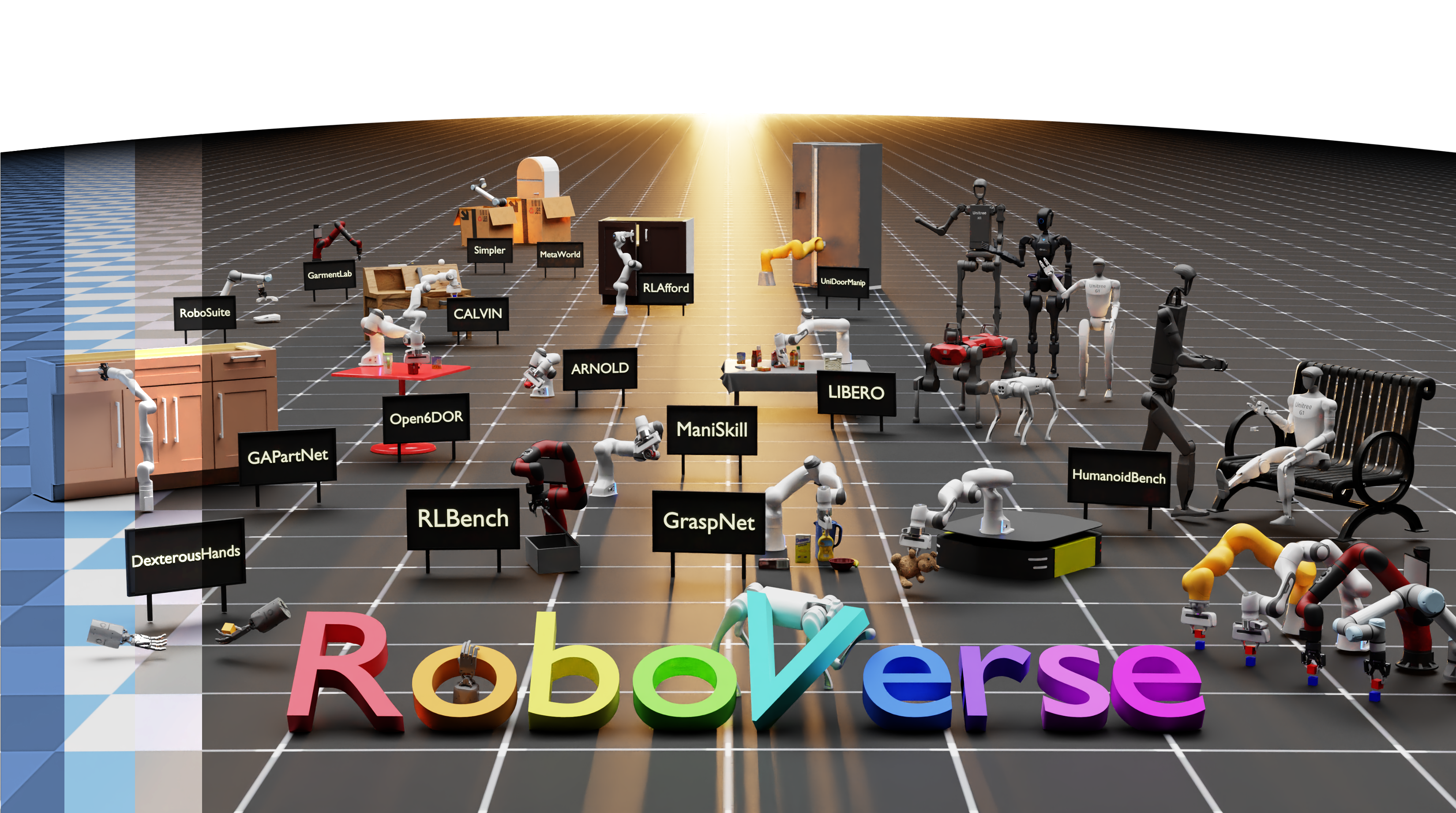

- 2025/10 RoboVerse receives the Best Open-source Award at RoboGen@IROS25!

- 2025/10 Invited talks at HKU and 3DCVer on UniFP and COLA. Check out the slides and talk (CN)!

- 2025/09 UniFP receives the Best Paper Award at CoRL 2025! The oral talk is available here!

- 2025/09 One paper on Agentic 3D Scene Generation is accepted by NeurIPS 2025.

- 2025/08 We won the humanoid dancing championship at the World Humanoid Robot Games (WHRG)!

- 2025/06 One paper on Unified Force and Position Control is accepted by CoRL 2025 as an Oral!

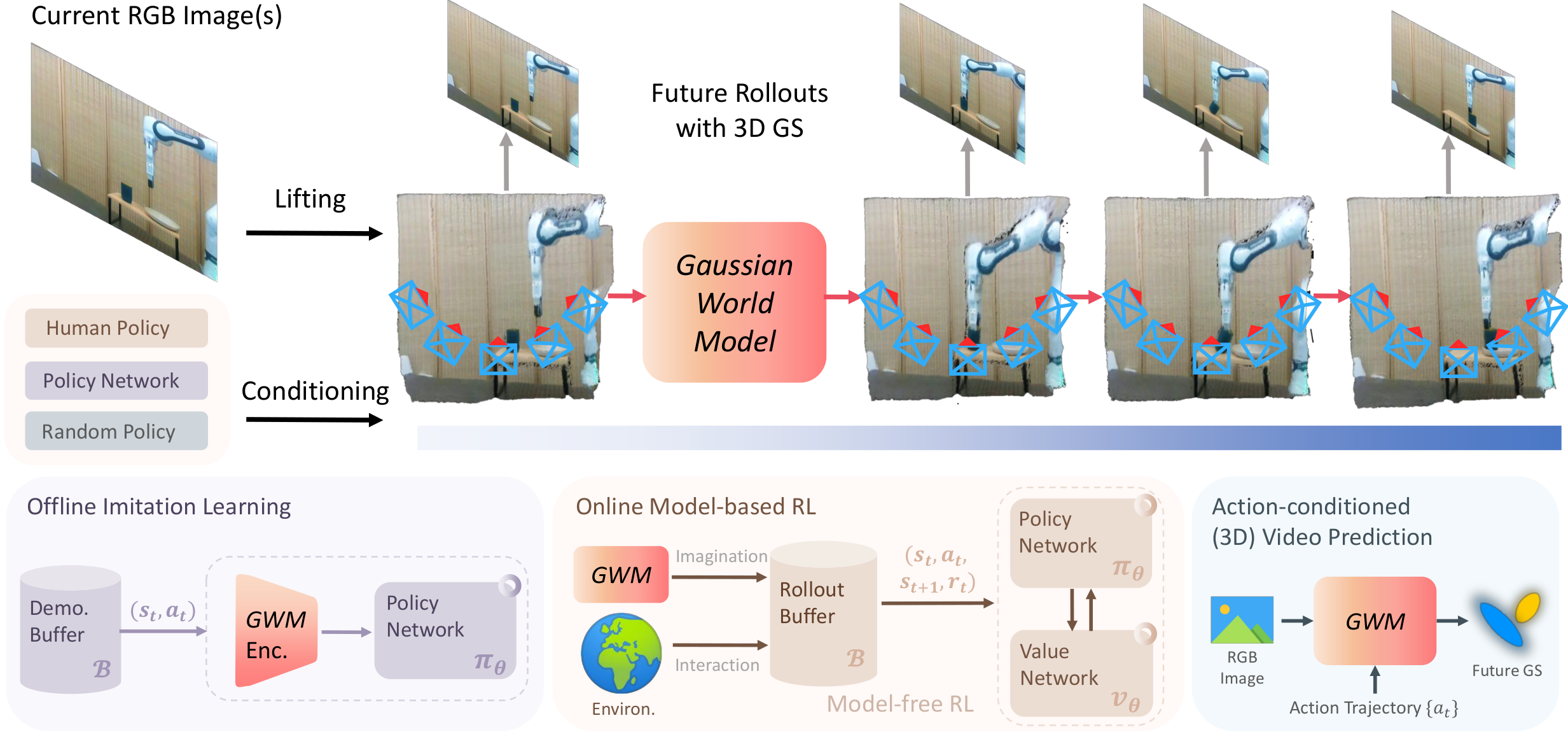

- 2025/06 Two papers on 4D World Model and Embodied Vision Language are accepted by ICCV 2025!

- 2025/06 I’m co-organizing the 5th 3D Scene Understanding workshop at CVPR 2025. See you in Nashville!

- 2025/04 RoboVerse is accepted by RSS 2025! Go check it out here!

- 2025/03 I recently gave a summary of our work at Boston Dynamics. Check out the slides!

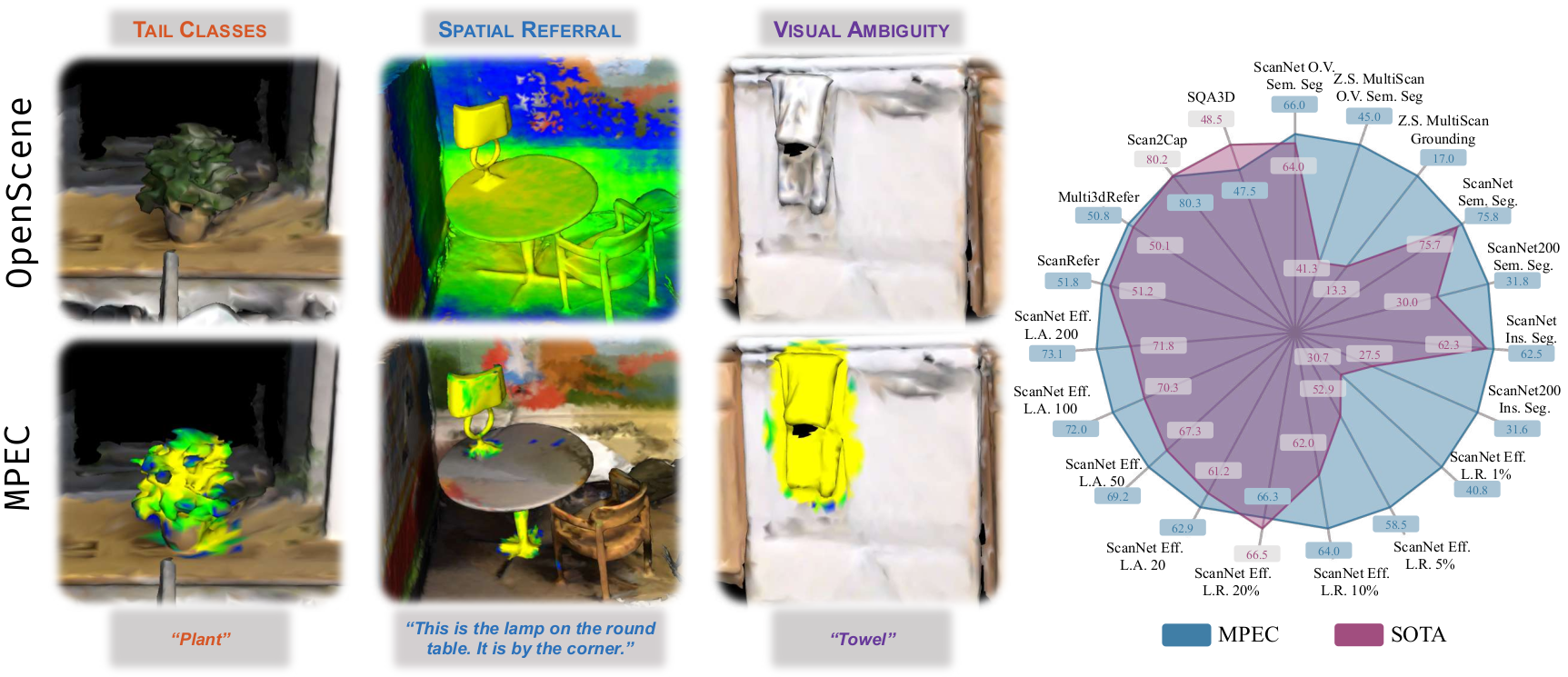

- 2025/02 Four papers on 3D Scene Understanding and Reconstruction are accepted by CVPR 2025!

- 2025/01 Two papers on Mobile Manipulation and Articulated Part Generation are accepted by ICRA 2025!

- 2025/01 One paper on Articulated Object Reconstruction is accepted by ICLR 2025!

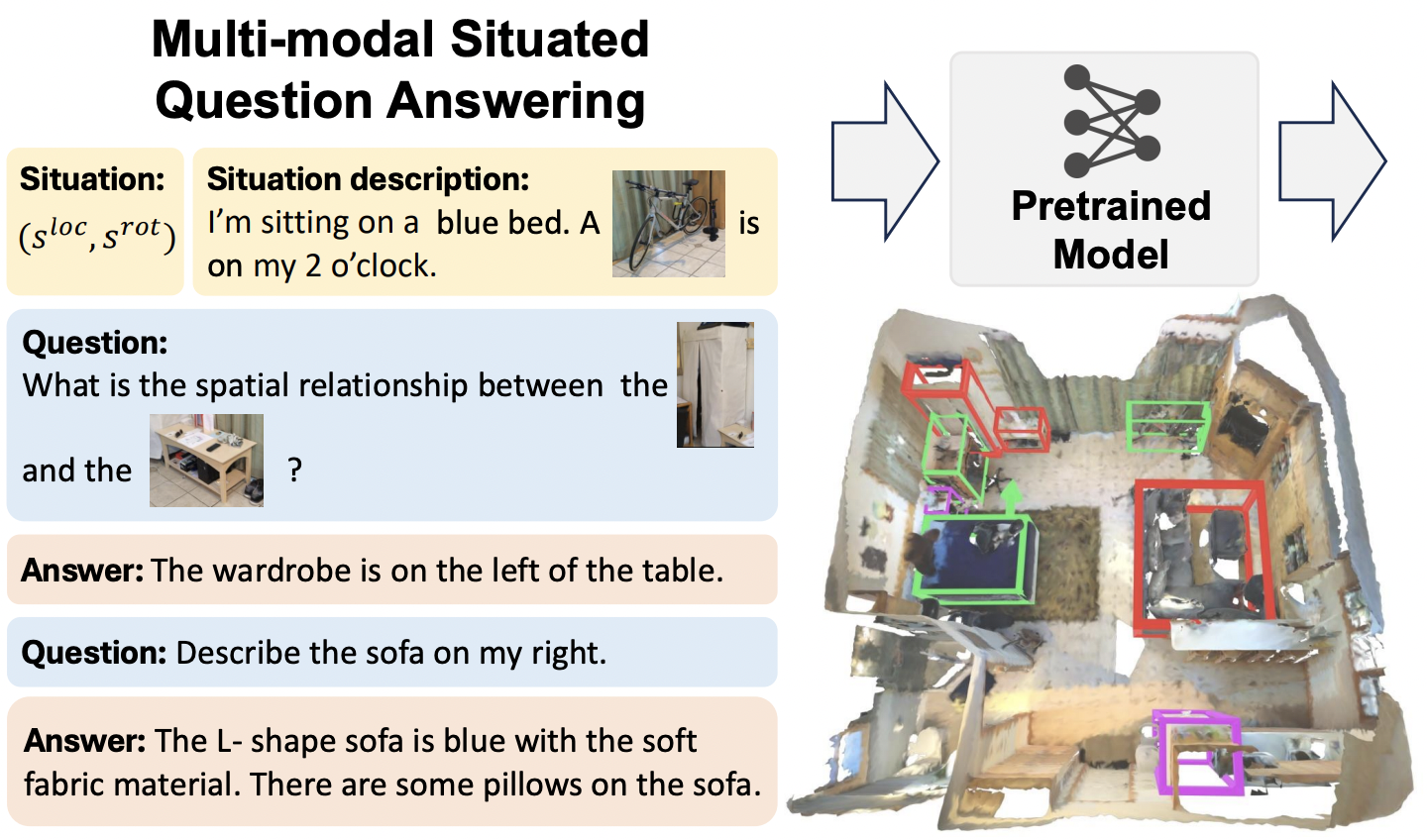

- 2024/12 One paper on Multi-modal 3D Situated Reasoning is accepted by NeurIPS 2024!

- 2024/10 I recently gave a summary of our work at BIGAI at ChinaGraph 2024. Check out the slides!

- 2024/10 I will be attending ECCV 2024 this year, see you in Milan!

- 2024/07 I recently gave a talk on Embodied 3D Vision on ZhiDX. Check out the slides!

- 2024/07 SceneVerse is accepted by ECCV 2024. Stay tuned for full data and model release at this link!

- 2024/07 Three papers on 3D-VL and Object-centric Learning are accepted by ECCV 2024.

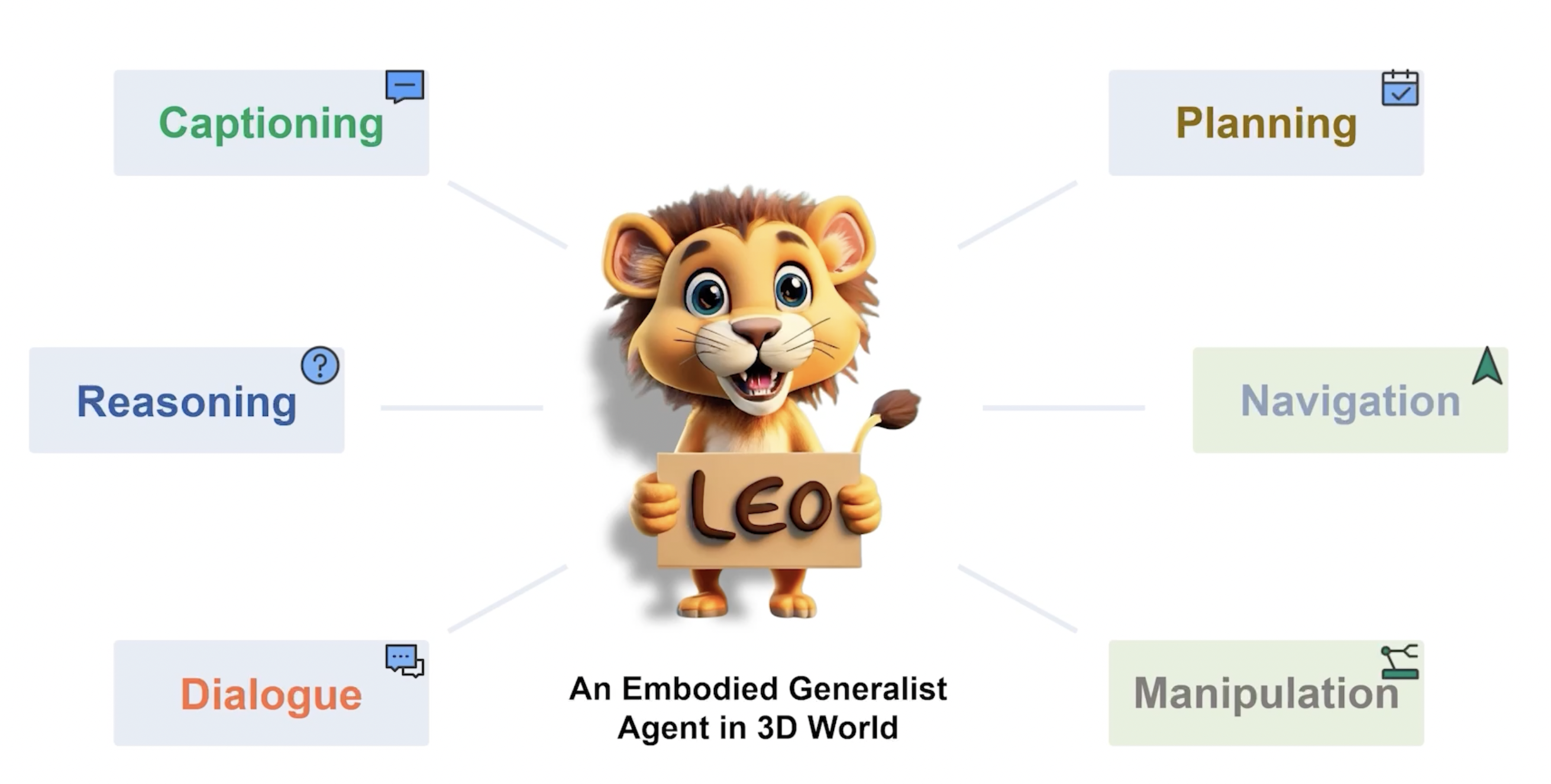

- 2024/06 Our embodied generalist LEO is accepted by ICML 2024. Check out our code and data at this link.

- 2024/06 I’m co-organizing the MANGO workshop at CVPR 2024. See you in Seattle!

- 2024/06 SceneVerse data is released! Find the download link and instructions at this link.

- 2024/06 Two papers on 3D motion and scene generation are accepted by CVPR 2024 as Highlights.

- 2024/03 LEO code and data are released! Find the download link and instructions at this link.

- 2024/02 Announcing SceneVerse for 3D-VL learning. Check out our project page.

Selected Recent Publications (All publications)

LARA: Latent Action Representation Alignment for Vision-Language-Action Models

(* indicates equal contribution. ✉ indicates corresponding author. † indicates project lead.)

SceneWeaver: All-in-One 3D Scene Synthesis with an Extensible and Self-Reflective Agent

Closed-Loop Open-Vocabulary Mobile Manipulation with GPT-4V